Latest Posts

How to Play Unblocked Games at School: Best Advice

Unblocked games are free and designed to let schoolchildren enjoy their recess to the fullest. Nonetheless, numerous educational institutions use firewalls and...

What Are Residential Proxies & How Do They Work?

In the world of online safety and concealment, residential proxies are an essential resource for numerous applications. What sets them apart is the use of...

RemoTasks Login: Simplify Remote Work!

Engaging in remote work offers its advantages, particularly for those who need employment but have a full schedule. If this describes you, you might want...

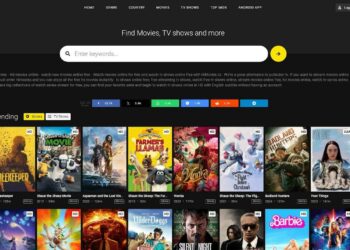

What is HiMovies? Is Hi Movies Safe to Use?

In a rapidly evolving digital landscape, especially within the realm of internet streaming services, HiMovies has become a prominent name, attracting the interest of film fans across...

What Does The iPhone “Notify Anyway” Mean?

iOS updates regularly introduce new features that users keep discovering day by day. Notify Anyway is among these options, initially accessible in...

LuckyCrush: Is it Safe to Use Lucky Crush With Other People?

Excitement and the pursuit of exhilaration are key motivations behind people's actions in their everyday lives. For those who are more introverted, striking up a...

Applob: Download Applob App for Android & iOS

Applob: In the modern era, marked by the digital revolution, where smartphones are a crucial component of our daily existence, the importance of streamlined and...

What Is TBG95? How To Play Unblocked Games

Are you looking for something fun to do during the school break? TBG95 might be the best choice for you because it has many games...

XCV Panel: Redefining Solar Energy Generation

For a considerable time, solar energy has been celebrated as a green and inexhaustible energy option, serving as a substitute for conventional fossil fuel-derived energy....

VSCO People Search: Find The Visual Society

Photography, which used to be a field reserved for professionals, has now become accessible to all. Advances in technology, the widespread use of smartphones, and...

Send a Snap with the Cartoon Face Lens: Snapchat Funny Filters

Currently, exchanging snaps and keeping up with snap streaks are all the rage. Yet, to elevate your ordinary snaps to a more captivating level, consider...

What is BFlix? Best BFlix.to Alternatives to Watch Movies

BFlix: Watching free online movies is an easy and cheap way to catch up on your favorite movies and TV shows without leaving your house. You can...

Score808: Live Sport Matches and Alternatives

Sports enthusiasts have consistently faced the challenge of locating a suitable streaming service like Score808 that provides free access to sports broadcast links. But now...

Proxyium: Your Partner in Protecting Online Privacy

In today's technologically advanced era, where our actions are monitored and information is perpetually gathered, maintaining internet confidentiality has become incredibly important. Proxyium, an intelligent...

GenYouTube: Download YouTube Videos, Photos And MP3

Using the online app GenYouTube, you can download YouTube videos from the internet in different formats for offline use on your device. An online app using browser...